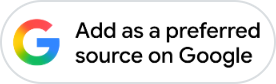

Anthropic has accused three AI laboratories, DeepSeek, Moonshot AI and MiniMax, of running industrial-scale campaigns to unlawfully extract capabilities from its Claude models, calling the practice a growing threat to AI safety and national security.

The company said the three labs generated more than 16 million interactions with Claude through roughly 24,000 fraudulent accounts, allegedly breaching its terms of service and regional access restrictions. Anthropic said the activity was aimed at “distillation”, a training technique in which a smaller or weaker model learns from the outputs of a more capable system.

Why Distillation Matters

Distillation is widely used across the AI industry for legitimate purposes, including the creation of smaller, cheaper and faster versions of in-house models. However, Anthropic said the same technique could be misused by competitors to copy advanced capabilities at a fraction of the time and cost required to build them independently.
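As a rough illustration of the technique, the Python sketch below shows one classic form of distillation, in which a student model is scored on how closely its softened output probabilities match a teacher's. It is a toy under stated assumptions: the function names, the temperature value and the example scores are hypothetical, and Anthropic has not said which training method the labs allegedly used. Distillation through a chat interface would more plausibly involve fine-tuning on collected text responses than on raw probabilities.

```python
import math


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's.

    The loss is zero when the student reproduces the teacher exactly and
    grows as the two distributions diverge. Training would adjust the
    student to push this value down.
    """
    p = softmax(teacher_logits, temperature)  # teacher (the capable model)
    q = softmax(student_logits, temperature)  # student (the smaller model)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Hypothetical scores over three candidate outputs.
teacher = [4.0, 1.0, 0.5]
student_before = [1.0, 1.0, 1.0]   # untrained student: no preference
student_after = [3.5, 1.2, 0.6]    # student after imitating the teacher

print(distillation_loss(teacher, student_before))
print(distillation_loss(teacher, student_after))
```

The second value printed is lower than the first, reflecting a student whose outputs have moved closer to the teacher's. The same mechanism serves legitimate in-house compression and the unauthorised copying Anthropic describes; what differs is whose model supplies the teacher outputs.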

Anthropic warned that illicitly distilled models may not retain important safeguards designed to prevent misuse, including protections related to bioweapons and malicious cyber activity. It said such capabilities could then be integrated into military, intelligence or surveillance systems, with risks multiplying further if those models were open-sourced.

Export Controls And National Security Concerns

Anthropic also argued that large-scale distillation weakens the intended impact of US export controls by allowing foreign labs to narrow the capability gap with American AI firms through extraction rather than independent innovation.

The company said this can create a misleading impression that export controls are ineffective, when in fact some advances may depend significantly on capabilities taken from US frontier models and on access to advanced chips to perform extraction at scale.

What Anthropic Says It Found

According to Anthropic, the three campaigns used a similar playbook involving proxy services, fraudulent accounts and coordinated prompting to evade detection while extracting high-value capabilities such as agentic reasoning, tool use and coding.

Anthropic said it attributed the campaigns with "high confidence" using IP address correlation, request metadata, infrastructure indicators and, in some cases, corroboration from industry partners.

DeepSeek, Moonshot And MiniMax In Focus

Anthropic alleged DeepSeek's campaign involved more than 150,000 exchanges and included attempts to extract reasoning behaviour and produce censorship-safe alternatives to politically sensitive prompts.

Moonshot AI allegedly conducted more than 3.4 million exchanges targeting coding, agentic reasoning, tool use, computer-use agents and computer vision.

MiniMax, Anthropic said, was linked to more than 13 million exchanges focused on agentic coding and tool orchestration, and was observed rapidly shifting traffic to a newly released Claude model during an active campaign.

How Anthropic Is Responding

Anthropic said it is expanding detection systems, behavioural fingerprinting and coordinated-account monitoring, while strengthening verification for account pathways it says are frequently abused. The company also said it is sharing technical indicators with other AI firms, cloud providers and relevant authorities, and is developing product, API and model-level countermeasures. It added that no single company can address the threat alone and called for coordinated action across industry and policymakers.
