This news article was not written by AI. No, seriously.

Honestly, when this writer was asked to cover the new chatbot taking the internet by storm, letting the AI piece together an article about itself seemed logical. Instead, this writer turned to Twitter and Google for research. Turns out, the best source was the chatbot itself.

ChatGPT, which generates human-like text in real time, is the latest addition to OpenAI Inc.'s suite of machine learning-driven language models. It is specifically designed to generate text in a conversational manner, making it ideal for applications such as chatbots and virtual assistants.

According to OpenAI, ChatGPT (Chat Generative Pre-trained Transformer) was trained on a massive dataset of text and then fine-tuned on conversations written by human trainers, which allowed it to learn the complexities of natural language. As a result, it can generate text so coherent and fluent that it is difficult to distinguish from text written by a human.

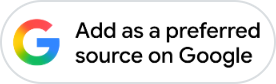

Sample this:

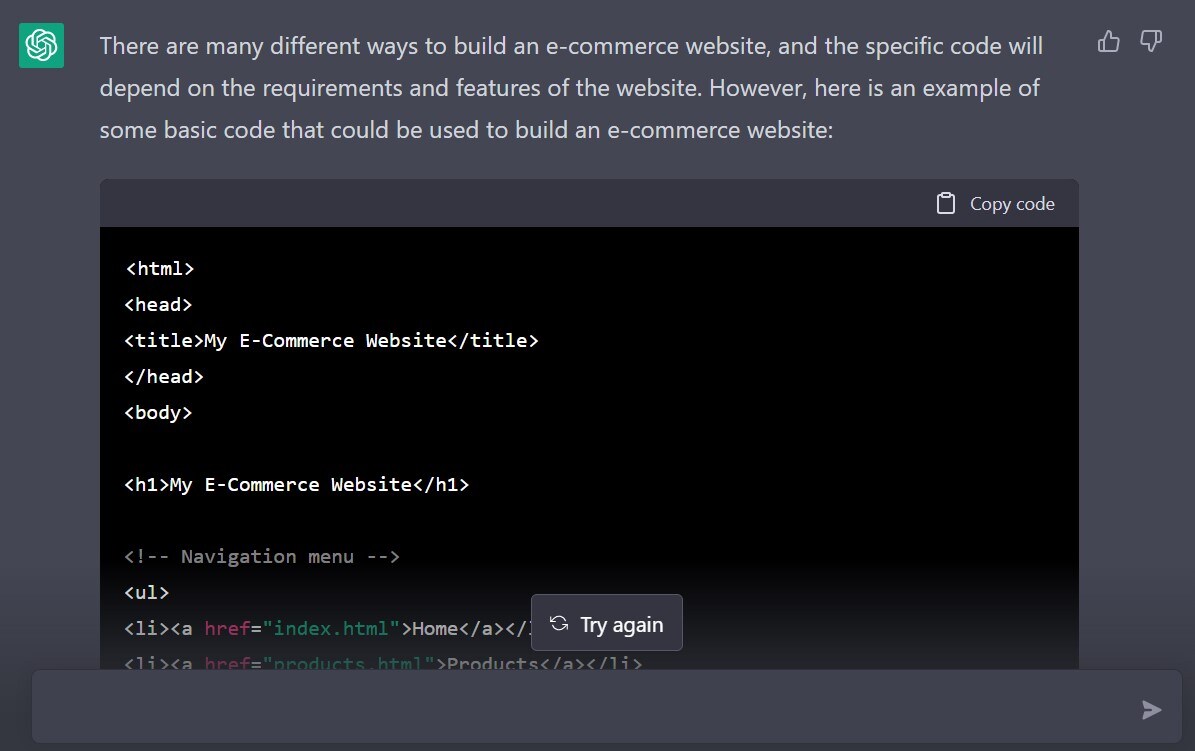

And it's not limited to school-level essays. ChatGPT can write the basic code for setting up a website (say, for e-commerce) in seconds.

You get the drift.

“ChatGPT represents a major step forward in the field of natural language processing,” said OpenAI CEO Sam Altman. “Its ability to generate highly coherent and human-like text in real-time opens up a wide range of exciting new possibilities for applications such as chatbots and virtual assistants.”

(Full disclosure: This quote was generated by ChatGPT.)

OpenAI was co-founded by Tesla Inc. CEO Elon Musk, Altman and other investors about seven years ago with the mission of ensuring that artificial general intelligence “benefits all of humanity”. Musk left its board in 2018 and later cited disagreements over the company's direction. It is now heavily funded by Microsoft Corp.

The San Francisco, California-based company was recently in the news after its second version of DALL-E went viral for creating instant images from text prompts. So good is the AI that popular tech YouTuber Marques Brownlee pitted it against his graphic designer—the winner got to keep his job.

ChatGPT, then, could put at risk the jobs of coders, writers, and journalists alike. Yet something is lacking: nuance. Like any other artificial intelligence, ChatGPT can only generate text from the data available to it. It cannot create new information. And when it attempts to do so, the result is confident prose with inherent bias and inaccuracies.

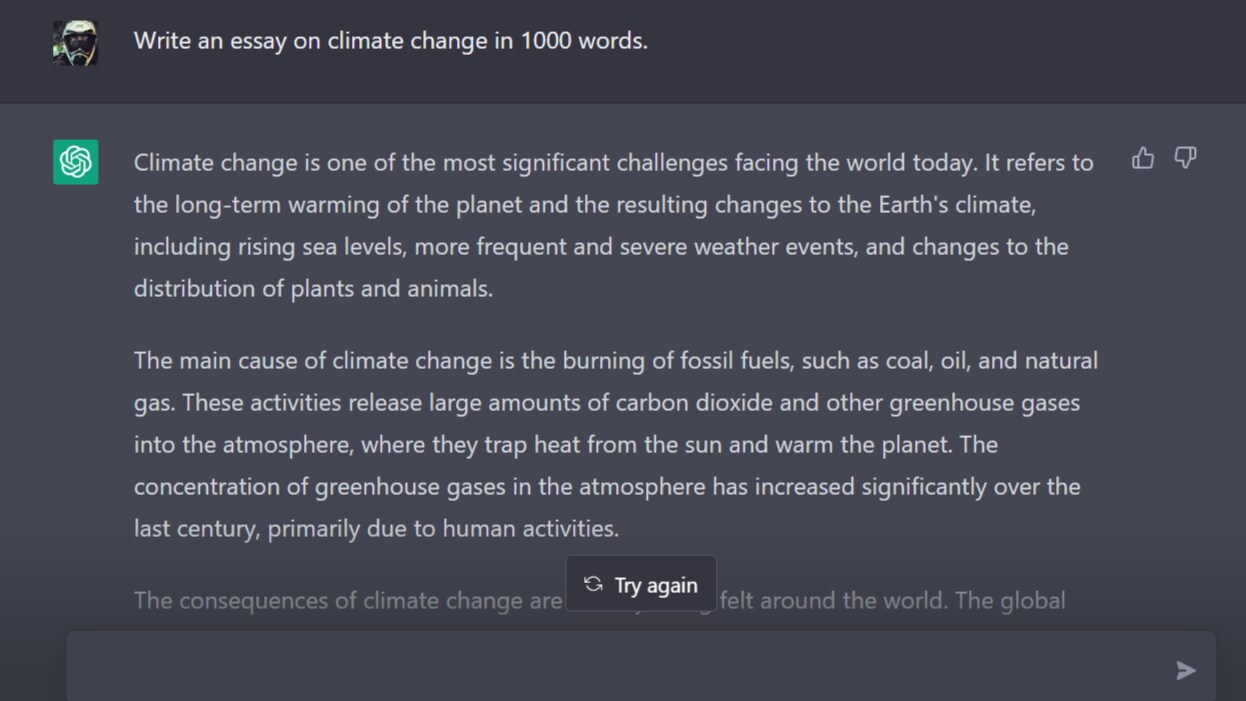

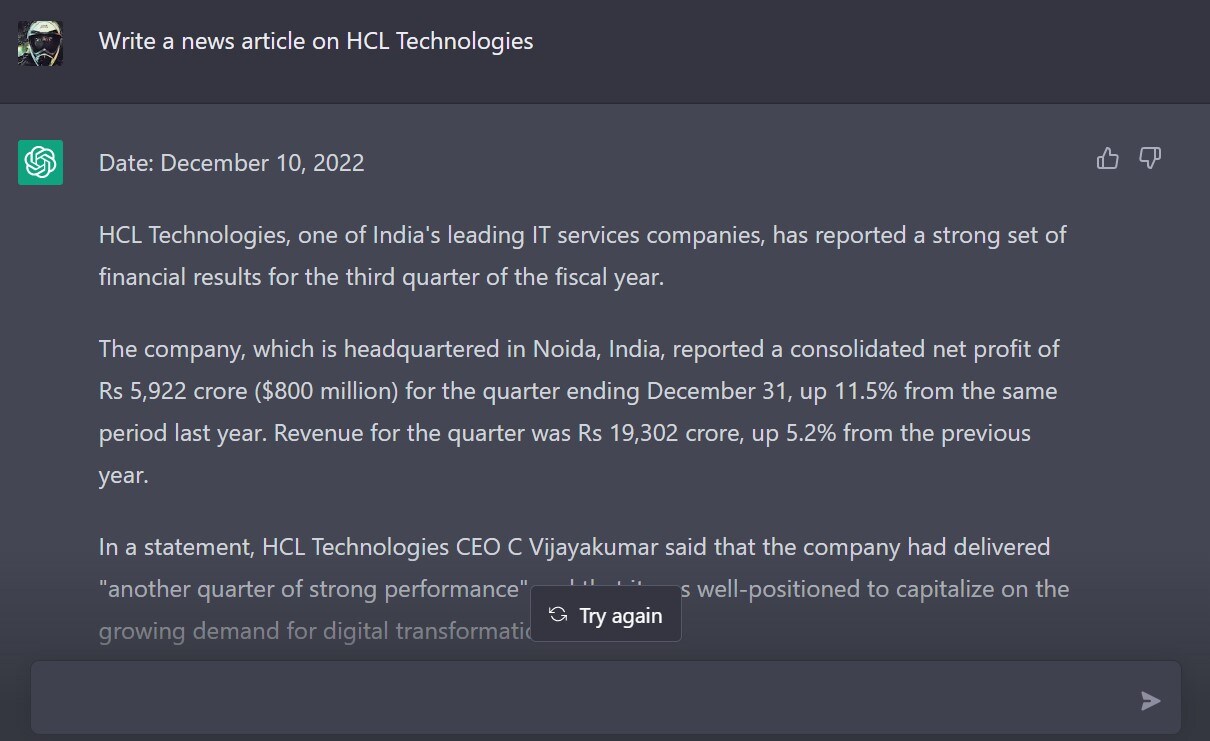

Sample this:

The bot makes up revenue and profit figures for a quarter that's still under way, and even attributes a quote to the company's chief executive officer, seemingly to bolster the article's credibility.

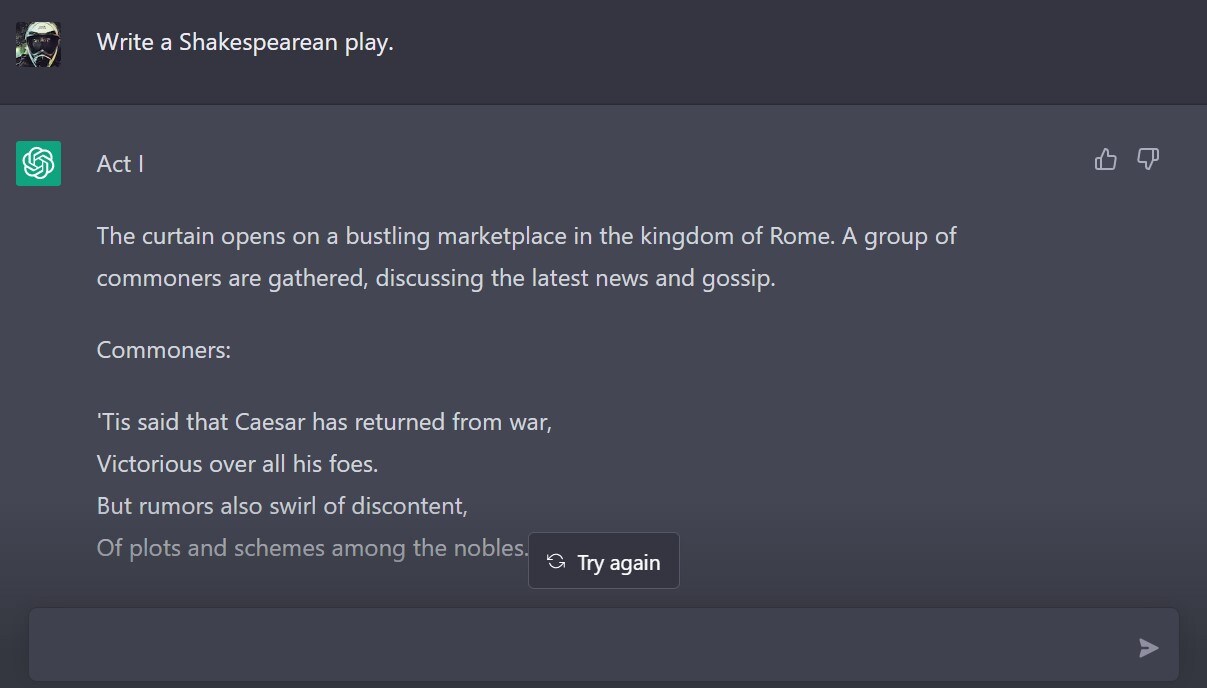

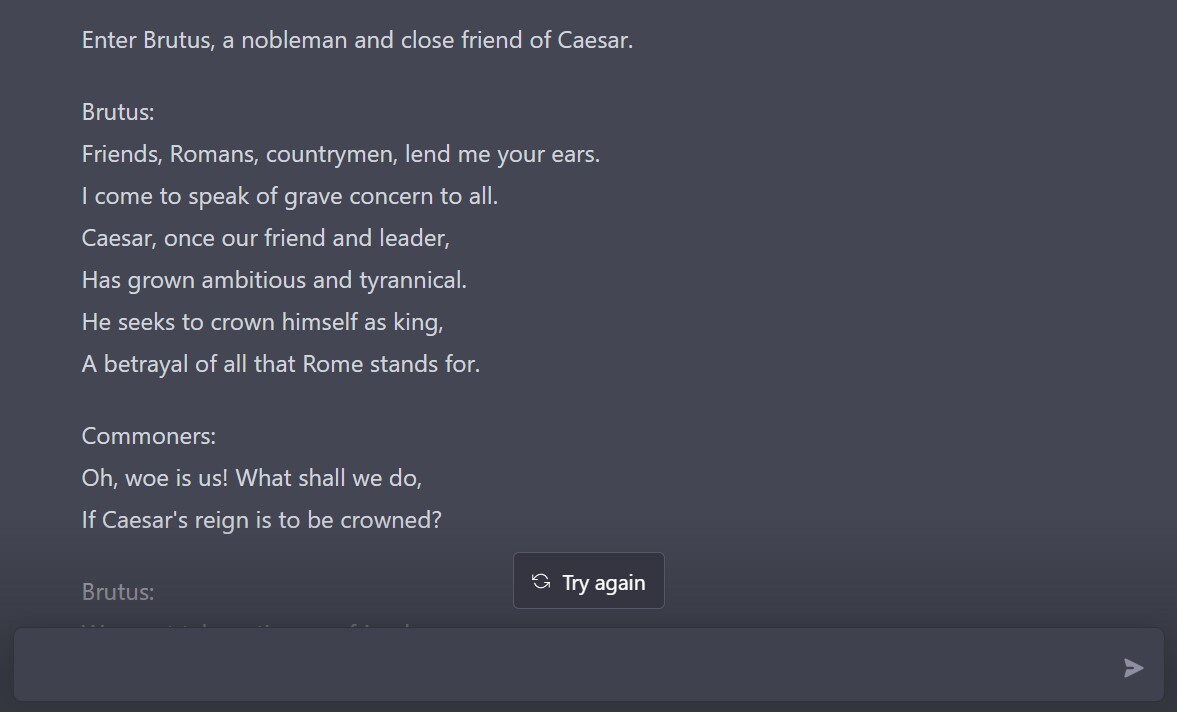

Now, sample this:

In ChatGPT's version of the Shakespearean tragedy, commoners cheer Brutus after the conspirators assassinate Julius Caesar.

The chatbot also poses a problem for teachers, who may want critical analysis in a write-up rather than a mere collection of facts, which is all the climate change essay offers.

And then there's the menace of fake news and misinformation.

Arvind Narayanan, a computer science professor at Princeton University, tested the chatbot on basic information security questions the day it was released. His conclusion: You can't tell if the answer is wrong unless you already know what's right.

“I haven't seen any evidence that ChatGPT is so persuasive it's able to convince experts,” he said in an interview with Bloomberg. “It is certainly a problem that non-experts can find it to be very plausible and authoritative and credible.”

Still, ChatGPT is a giant leap forward for natural language processing, a far cry from chatbots that generated text like, well, bots. It's also a far more convenient research tool than Google, where the information you need may be buried on the second page of search results. OpenAI said it released ChatGPT as a “research preview” to incorporate feedback from real-world use, which it views as critical to building safe systems.

AI will get better as it learns from that feedback. Until then, we get to keep our jobs.

Essential Business Intelligence, Continuous LIVE TV, Sharp Market Insights, Practical Personal Finance Advice and Latest Stories — On NDTV Profit.